Robotics 1

Robotics 1

Preface

This website contains my lecture notes from a lecture by Lorenzo Masia from the academic year 2022/2023 (University of Heidelberg). If you find something incorrect/unclear, or would like to contribute, feel free to submit a pull request (or let me know via email).

Acknowledgements

Thanks to Pascal Hansen and Kiryl Kiril for corrections.

Lecture overview

- Introduction [slides] [tutorial – basics]

- Robotics Components [slides] [tutorial – rotations, Grübler]

- Kinematics [slides] [tutorial – Grübler, DH]

- Homogeneous Transformation and DH [slides] [tutorial – DH]

- Inverse Kinematics [slides] [tutorial – DH, Inverse Kinematics]

- Inverse Kinematics (numerical methods) [slides] [tutorial – Geometric Jacobian]

- Differential Kinematics [slides]

- Inverse Differential Kinematics [slides] [tutorial – trajectory planning]

- Trajectory Planning in Joint Space [slides] [tutorial – inverse kinematics]

- Trajectory Planning in Cartesian Space [slides] [tutorial – Grübler, DH]

- Manipulability [slides] [tutorial – Jacobian, Lagrangian]

An excellent resource for more formal and in-depth study is Robot modeling and Control by Mark W. Spong, which the lecture frequently references. The first edition can be downloaded for free, while the second needs to be purchased (or downloaded too, if you know where to look).

Robotics Components

Definition (link/member): individual bodies making up a mechanism

Definition (joint): connection between multiple links

Definition (kinematic pair): two links in contact such that it limits their relative movement

- low-order kinematic pairs: point of contact is a surface (eg. revolute/prismatic/spherical)

- high-order kinematic pairs: point of contact is a dot/line (eg. gears)

Definition (kinematic chain): assembly of links connected by joints

- is a closed loop (left image) if every link is connected to every other by at least two paths (including the ground – imagine it as a single link), else it’s open (right image)

Degrees of Freedom

This is pretty important and will most likely be on the final, so it is best to practice this. Here are some of the examples from the exercises with my solutions (sorry for my terrible drawing).

Definition (degree of freedom) of a mechanical system is the number of independent parameters that define its configuration. It can be calculated by the Grübler Formula:

where

- DOF of the operating space ( for 2D, for 3D)

- in 2D it’s for orientation and for position

- in 3D it’s for orientation and for position

- number of links

- number of kinematic pairs

- notice that this is not the number of joints – if a joint includes more kinematics pairs, it needs to be split for the equation to work (see coincident joints below)

- degree of constraint of the -th kinematic pair

- eg. a revolute joint in 2D takes away DOF (we can only rotate)

For example, the following has DOF:

If you’re viewing this in dark theme, the links are colored light blue (red in the equation) and the joints are colored yellow (blue in the equation) – sorry!

Definition (coincident joints): when there are more than two kinematic pairs in the same joint

Actuators

Definition (actuator): a mechanical device for moving or controlling something

- DC brushed motor: based on Lorentz’ force law (electromagnetic fields)

- brushed because the metal brush powers the magnets (they’re turning)

- brushless uses the position of the motor to turn on/off currents for specific windings

- usually contains gears reductions to trade torque for speed and sensors to measure the position of the motor (see further)

- since the voltage controls the motor but setting it to a specific value is impractical, pulse width modulation (PWM) is used:

- to measure the position of the motor, optical shaft encoders (incremental/absolute) are used:

Kinematics

- establishment of various coordinate systems to represent the positions and orientations of rigid objects and with transformations among these coordinate systems

- the dimension of the configuration space () must be larger or equal to the dimension of the task space () to ensure the existence of kinematic solutions:

Forward (direct) Kinematics

Definition (forward/direct kinematics): the process of finding the position/orientation of the end-effector given a set of joint parameters .

Body Pose

The pose/frame of a rigid body can be described by its position and orientation (wrt. a frame)

- the position is a vector

- the rotation is an orthonormal matrix with (a determinant of would flip the object, we only want rotation)

- due to orthogonality:

The elementary rotations about each of the axes are the following:

- important to remember for calculating robot kinematics (or just think about what the rotation about an axis is doing to the other two axes, it’s not too hard to remember)

- positive values for the rotation are always counter-clockwise (just like normal rotations)

- for remembering where the minus is, it’s flipped only for

When discussing multiple frames, we use the following notation:

E.g. if we have the same point in three different frames, we know that

For representing any rotation, we use Euler’s ZYZ angles, which composes three rotations (Z, Y, Z):

For the inverse problem (calculating angles from a matrix of numbers), we can do

- if we divide by zero somewhere we get degenerate solutions where we can only get the sum of the angles (one of the problems with Euler angles)

- alternative is RPY angles, which are also three rotations (roll, pitch, yaw) and are just as bad

Denavit & Hartenberg Notation

This is a really important concept and will 99% be on the final, so it is best to practice this. Here is a useful PDF with examples (starting at page 13) where you can practice creating the DH tables and Forward Kinematics matrices.

For relating the base and the end effector, we need to both rotate and translate, so we’ll use homogeneous coordinates (matrix is ), encoding both rotation and translation:

To create the homogeneous coordinates in a standardized way, we use the Denavit & Hartenberg (DH) notation which systematically relates the frames of two consecutive links. We have 4 parameters, each of which relates frame to frame :

| parameter | meaning |

|---|---|

| link length | distance between and along |

| link offset | distance between and along |

| link twist | angle between and around |

| joint angle | angle between and around |

A mnemonic to remember which parameter relates which is that it’s from a to z (i.e. relates s).

Example: anthropomorphic arm (3 revolute joints):

| 1 | ||||

| 2 | ||||

| 3 |

We then get the following transformations:

To get the final transformations, we can multiply the matrices and get

This will yield the entire homogeneous matrix – if we only want position (in this case a homogeneous one with the last element being ), we can only focus on the last row, which can simplify the computation:

Things to keep in mind:

- the axis is always the one we rotate about by

- if the joint is prismatic, axis is instead the one that moves by

- good idea to fill in the variables first and the rest after

Inverse Kinematics

Definition (inverse kinematics): the process of finding a set of joint parameters given the position/orientation of the end-effector .

Definition (redundancy): arises when there are multiple inverse solutions

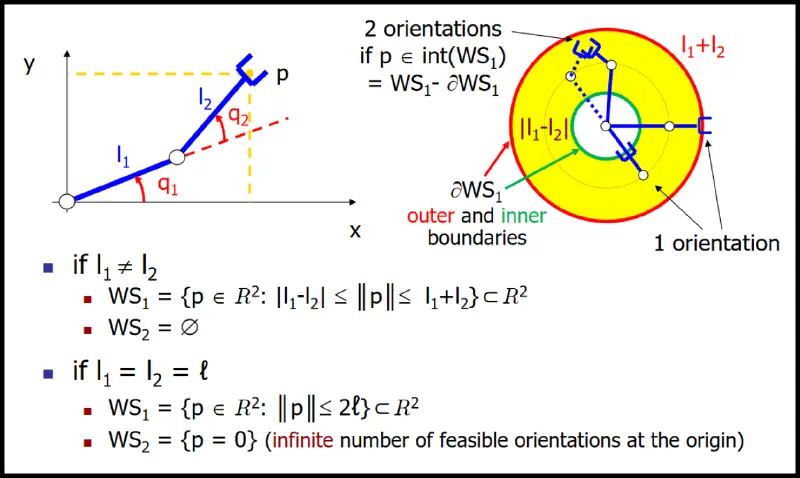

When dealing with inverse kinematics, an important notion are workspaces:

- primary workspace: set of positions that can be reached with at least one orientation

- secondary workspace: set of positions that can be reached with all orientations

Analytical solution (closed form)

- preferred (if it can be found)

- use geometric inspection, solve system of equations

Example: spherical wrist (3 revolute joints):

The matrix is a ZYZ Euler rotation matrix and can be solved as such (see Body pose).

- we use indexes to , because the wrist is usually at an end of another manipulator

For solving various manipulators (SCARA, for example), the law of cosines might also be quite useful:

Definition (decoupling): dividing inverse kinematics problem for two simpler problems, inverse position kinematics and inverse orientation kinematics

- is applicable for manipulators with at least 6 joints where the last 3 intersect at a point

- the general approach is the following:

- calculate the orientations and position where the wrist needs to be

- calculate the orientations of the rest of the robot

Numerical solution (iterative form)

Two major ways of solving it, namely:

Newton method:

- uses Taylor series around point to expand the forward kinematics

- stripping the higher-order terms and solving for , we get

- is nice when , otherwise we have to use the pseudo-inverse

- bad for problems near singularities (becomes unstable)

- note: we’re using the Jacobian as a function here but it’s just a matrix

Gradient method:

- aims to minimize the squared error (the is there for nicer calculations):

- the gradient of is the steepest direction to minimize this error:

- to move in this direction (given the current solution), we do the following:

- should be chosen appropriately (dictates the size of iteration steps)

- we don’t use the inverse but the transpose, which is nice!

- may not converge but never diverges

The methods could also be combined – gradient first (slow but steady), then Newton.

Velocity Kinematics

- relate linear/angular velocities of the end effector to those of the joints

- again determined by the Jacobian matrix [nice overview video]

- can be obtained either geometrically or by time differentiation

- depends on the current configuration of the robot (encodes this information)

- always a matrix:

Two main cases when calculating the geometric Jacobian are the following:

| Prismatic Joint | ||

|---|---|---|

| changes linear velocity (next joint moves linearly) | ||

| doesn’t change the angular velocity | ||

| Angular Joint | ||

| changes linear velocity too – moves next link is the vector from to | ||

| changes angular velocity (next joint rotates linearly) |

Where

- is the orientation of the axis in the th frame and

- is the distance from the center of the th frame to the end-effector

- is the cross product between the vectors , defined like this (just remember 2-3-1):

And they can be calculated from the forward kinematics matrix (namely the DH matrices ):

Example: planar 2D arm:

| 1 | ||||

| 2 |

We now calculate s and s from the DH matrices:

We therefore get

Since , we get the s and, as we see,

The full Jacobian matrix looks like this:

The rank of the matrix is between and , depending on the joint configuration.

Higher-orders

For calculating other differential relations, we can just continue to derivate:

| Relation | Equation |

|---|---|

| velocity | |

| acceleration | |

| jerk | |

| snap |

Inverse Velocity Kinematics

- inverse problem to velocity kinematics

- for square non-singular , we can just do

- has problems:

- is large near singularities

- for redundant robots, we don’t have an inverse (see pseudo-inverse)

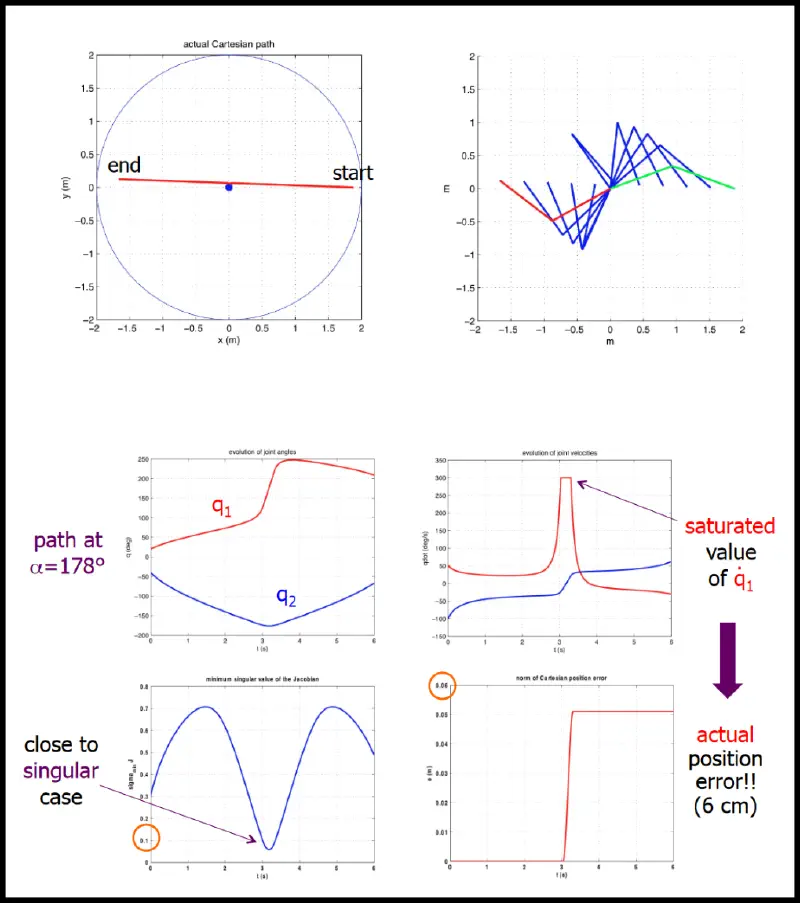

Singularities

In configurations where the Jacobian loses rank, we’re in a kinematic singularity – we cannot find a joint velocity that realizes the desired end-effector velocity in an arbitrary direction of the task space. Close to the singularity, the joint velocities might be very large and exceed the limits.

To remedy this, certain methods exist that balance error and velocity to make the robot movement smoother, like damped least squares, which minimizes the sum of minimum error norm on achieved end-effector velocity and minimum norm of joint velocity.

Pseudo-inverse

In the case that there is no solution to (singularities, the robot simply can’t move that way, etc.), we still want to find a vector that minimizes

This turns out to be the vector , where is the pseudo-inverse:

- can be obtained using SVD and

- satisfies the following conditions:

Force Kinematics

We can again use the Jacobian to relate forces of the end effector to those of the joints.

Given as the vector of infinitesimal joint forces/torques and as the vector of infinitesimal end effector forces/torques. Applying the principle of virtual work (the work of the forces applied to the system are zero: ()), we get

Simplifying, we get

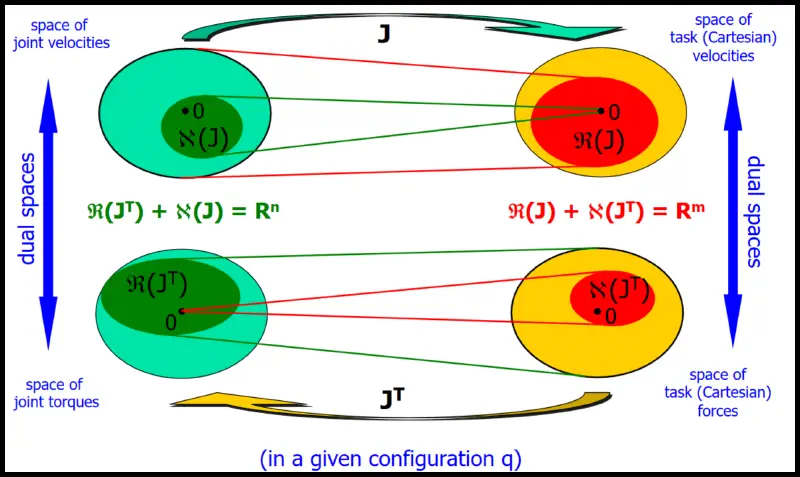

This creates kinetostatic duality of the Jacobian

As a linear algebra reminder:

- : max number of rows/columns of that are linearly independent

- if the rank of is full (typically ), then the robot’s EE can move in any direction; if not, there exists a direction in which the robot cannot move

- : vector subspace generated by all possible linear combinations of columns of

- i.e. is a set of all possible values of where can map vectors

- determines the velocities that EE can reach based on velocities of joints

- : vector subspace generated by all vectors such that

- i.e. is a set of all possible values of where maps to the vector

- is the set of joint velocities that produce no EE velocity

Note that the problems are switched: compare the two equations

- for velocities, it is trivial to calculate the end-effector velocity from joint velocities

- for torques, it is trivial to calculate the joint torques from the end-effector torques

For completeness, here are some properties of the Jacobian and its transpose:

Trajectory Planning

Task: generate reference inputs to the controller when given:

- only start and end defined (minimum requirements)

- sequence of points defined (more difficult)

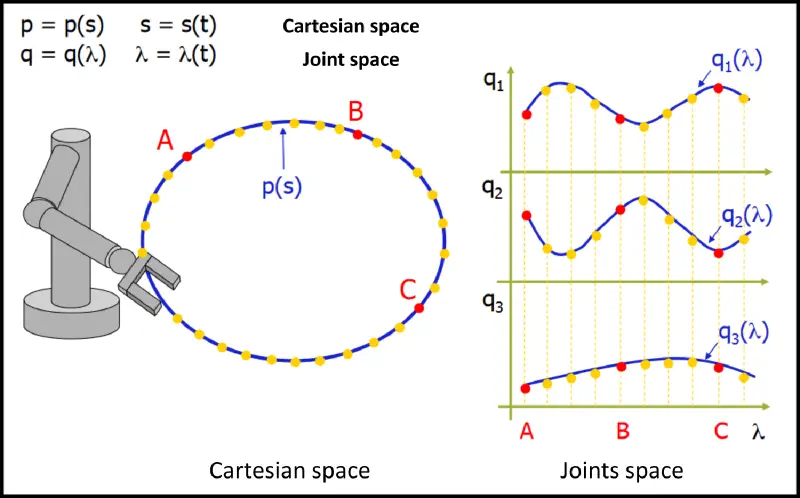

Definition (path): set of points in the joint space which the manipulator has to follow in the execution of the assigned motion (purely geometric).

Definition (trajectory): a path on which a timing is specified (velocities, accelerations, etc.).

- i.e. trajectory = geometric path + time law

- notation is

To plan the trajectory, we do the following:

- get the sequence of pose points (i.e. knots) in the Cartesian space

- create a geometric path linking the knots (interpolation)

- sample the path and transform the sequence to the Joint space

- interpolate in the Joint space

We do this because planning in the Cartesian space can easily move the robot into configurations that can’t be realized, which isn’t the case for the Joint space.

The time law is chosen based on task specification:

- stop at a point, move at constant velocity

- considers constraints and capabilities (max velocity, accelerations)

- may attempt to optimize some metric (min transfer time, min energy, etc.)

In Joint Space

Task: smoothly interpolate between points in the joint space

Cubic polynomial

Useful for interpolating between two positions () given the start and end velocity ():

The cubic polynomial in the normalized form is the following:

Plugging for known values helps us solve the polynomial:

If we don’t care about normalization, the general form is the following:

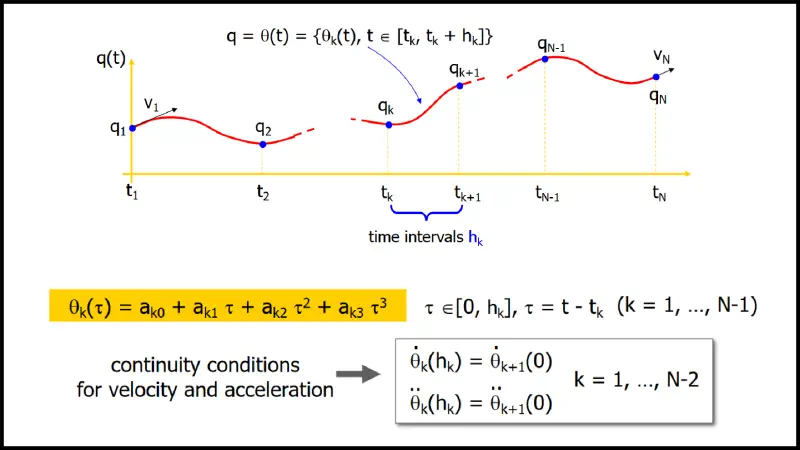

Splines

When we have knots and want continuity up to the second derivative, we can concatenate cubic polynomials, which minimizes the curvature among all interpolating functions (with nd derivatives)

- coefficients

- conditions:

- for the positions of the knots

- for the continuity for first and second derivative at the internal knots

- we have free parameters for assigning

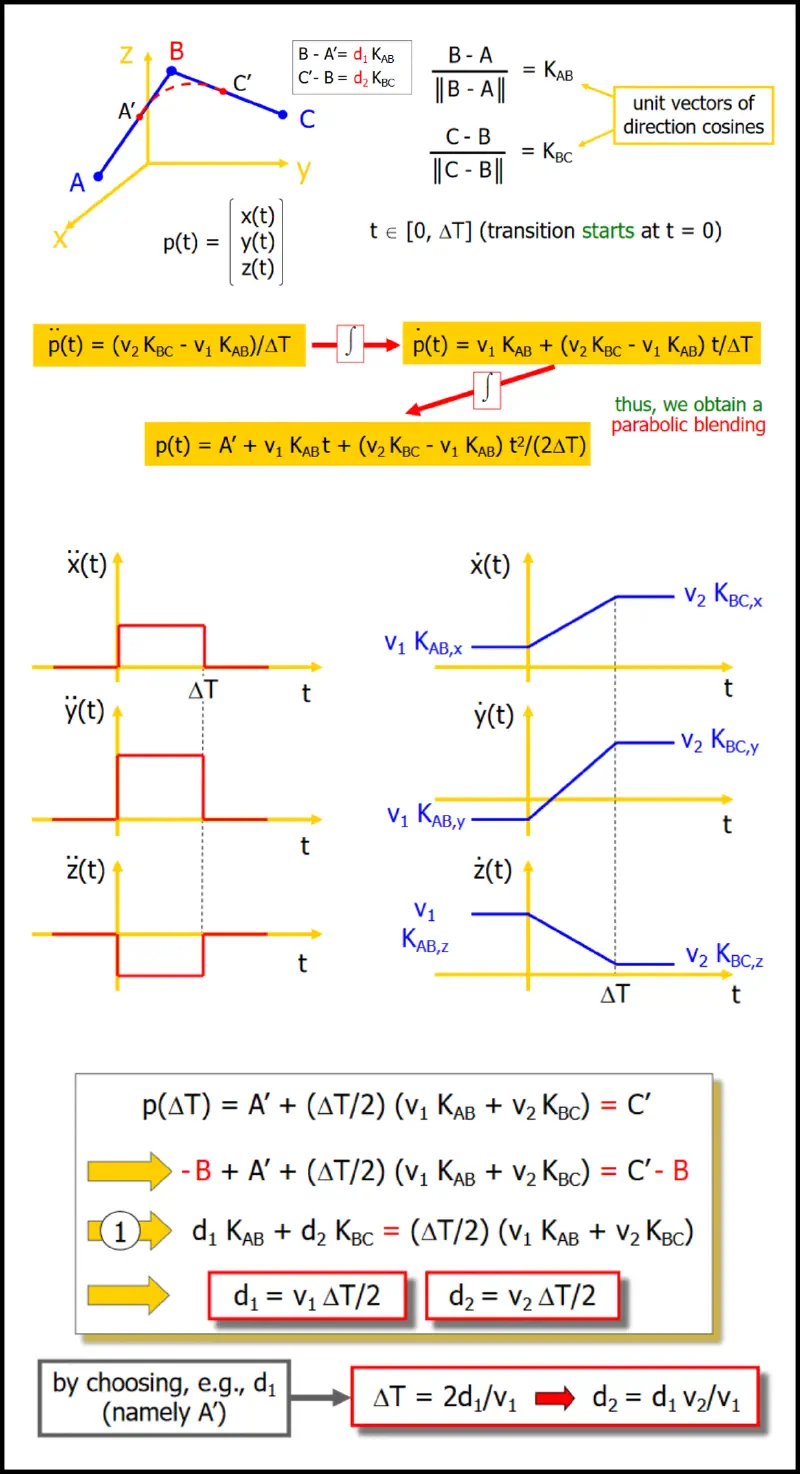

In Cartesian Space

Notation here is (as opposed to ), since we’re planning the position of the end-effector, not the position in the joint space.

Has advantages and disadvantages:

- [+] allows more direct visualization of the generated path

- [+] can account for obstacles + lack of “wandering”

- [-] needs on-line kinematic inversion

When calculating the path:

- for simplicity, position and orientation are considered separately

- the number of knots is typically low (2 or 3), simple interpolating paths are considered

Position

Given and (usually ), we want to analyze the trajectory.

For a linear path from to :

- path parametrization is with

- setting (given the length of the path)

- we can differentiate to get velocity and acceleration:

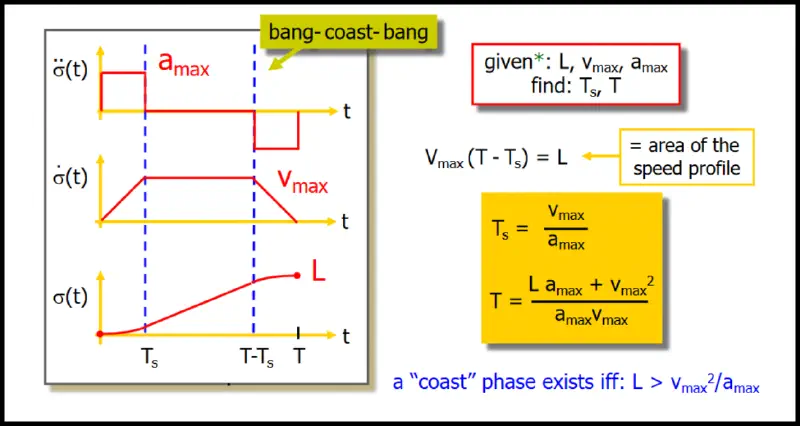

For the motion, we use the trapezoidal speed (bang-coast-bang):

To generalize this (i.e. concatenate the paths), we can over-fly the point in between. A specific example of how this would look like is the following:

Orientation

- use minimal representations (e.g. ZYZ Euler angles) and plan the trajectory independently

- use axis/angle representation:

- determine a neutral axis and an angle by which to rotate

- plan a timing law to interpolate the angle and the axis

Optimal trajectories

For Cartesian robots (PPP):

- straight line joining the two positions is one of those

- can be executed in minimum time under velocity-acceleration constraints

- optimal timing law is the bang-coast-bang

For articulated robots (at least one R):

- and 2. are no longer true in general case, but time-optimality still holds in the joint space

- note that straight lines in joint space don’t correspond to those in Cartesian space!

Uniform time scaling

Say we want to slow a given timing law down or speed it up because of constraints, we don’t need to recalculate all of the motion profiles: velocity scales linearly, acceleration scales quadratically:

Example (max relative violations) with :

Minimum uniform time scaling is therefore

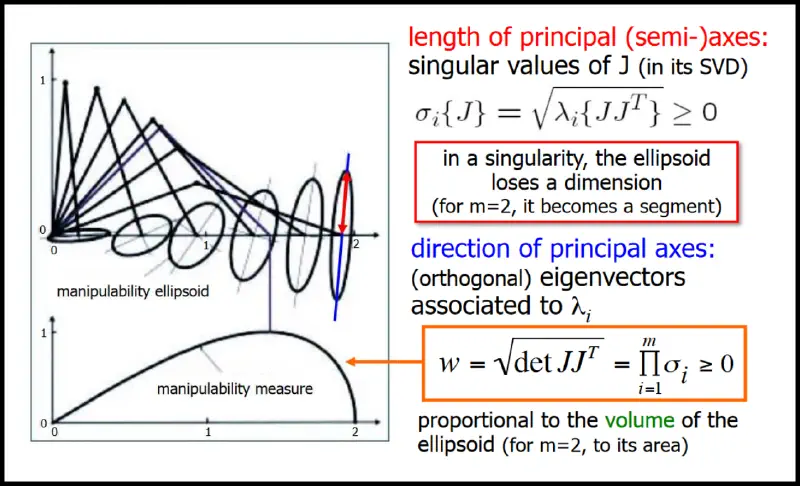

Manipulability

Velocity manipulability

In a given configuration, we want to evaluate how „effective“ is the mechanical transformation between joint velocities and EE velocities (how „easily“ can EE move in various directions of the task space).

- we consider all EE velocities that can be obtained by choosing joint velocities of unit norm:

Force manipulability

We can apply the exact same concept to forces:

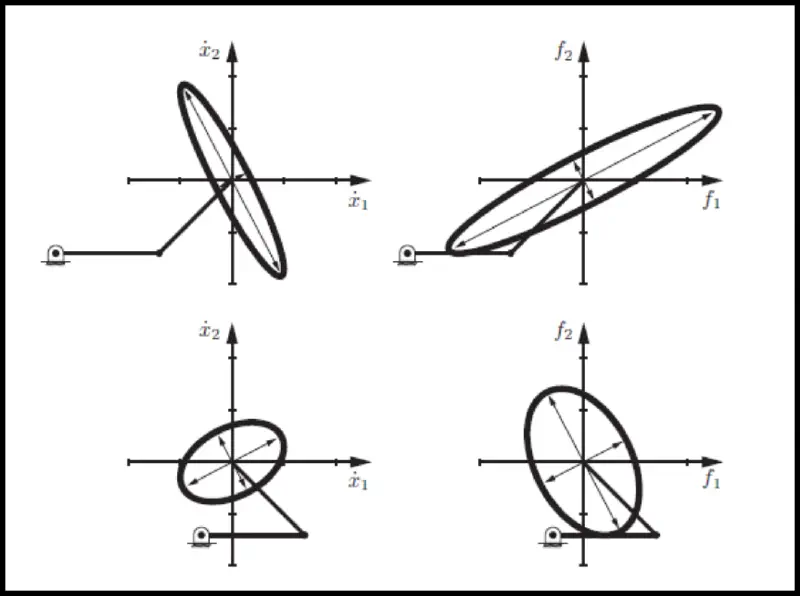

The comparison between the two is interesting – they are orthogonal (velocity left; force right):

The explanation that the teacher gave in class is pretty good: if your arm is almost stretched, it can move very quickly up and down but not too quickly forward/backward. On the other hand (pun not intended), the forces you can apply up/down are not nearly as large as when pushing/pulling.